Federal Conflict Erupts Over National Security AI

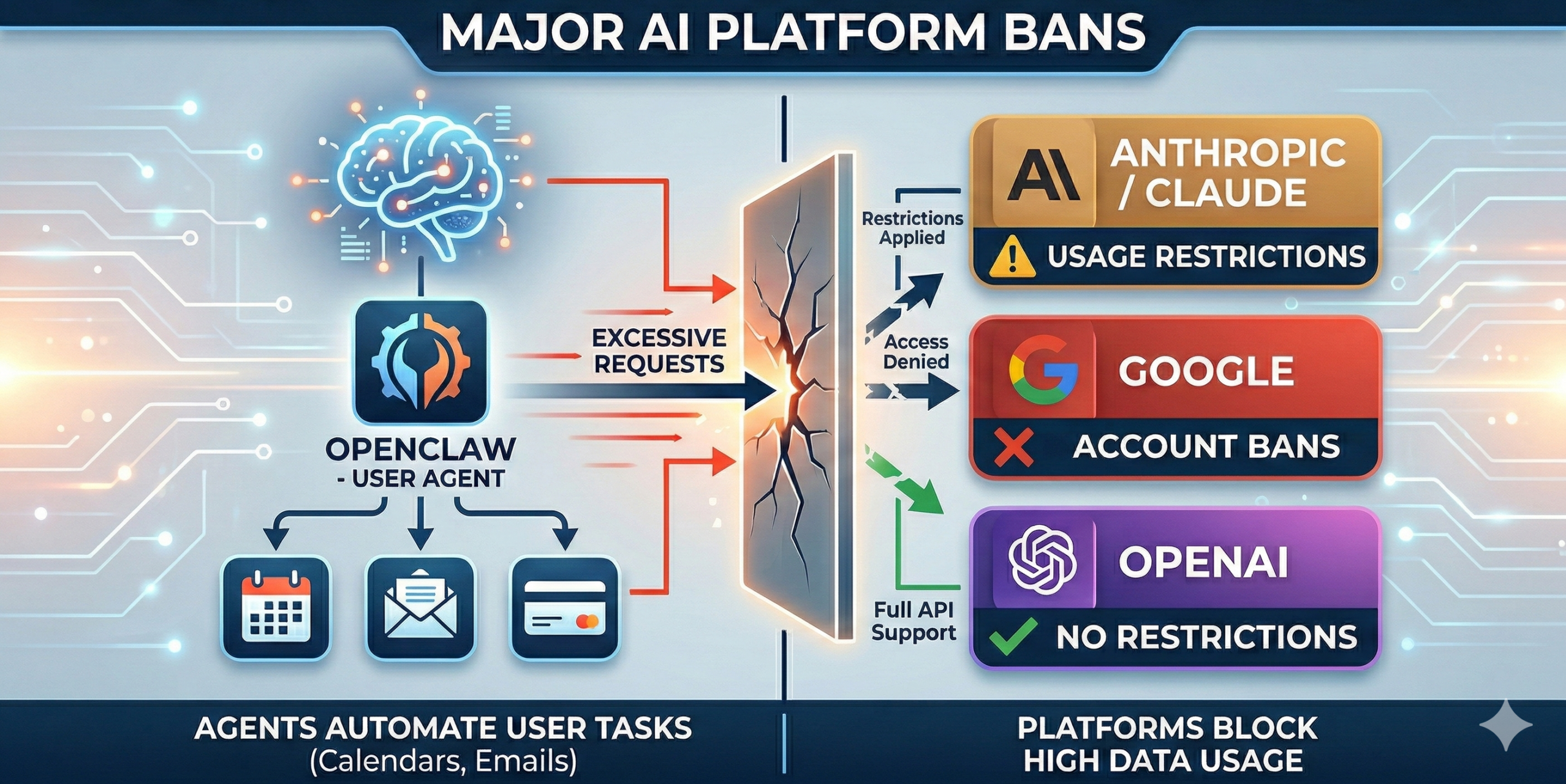

A major national security story broke this week as Anthropic CEO Dario Amodei revealed that his company has been banned from federal government use. The conflict arose after the Defense Department demanded that Anthropic hand over its AI technology, known as Claude, without restrictions for military use.

Anthropic refused, citing safety concerns and “red lines” it would not cross. In response, the federal government has moved to purge the company’s tools from its agencies. This comes at a time when the White House is pushing for a “National Security AI Directive” to ensure the military has unrestricted access to the most powerful AI models.

This clash highlights the growing tension between the private companies that build AI and the government agencies that want to use it for defense and intelligence purposes. It raises fundamental questions about who controls the “brain” of an AI system when national security is on the line.

Why this matters for The Knoxville AI Hub

For Knoxville-area residents, especially those working in the defense corridor between Oak Ridge and Maryville, this highlights the high stakes of AI safety. It shows that “AI Ethics” isn’t just a classroom topic; it has real consequences for government contracts and national security. For small businesses that use tools like Claude, this serves as a reminder that the AI tools we rely on can be affected by sudden shifts in federal policy and by legal battles.

For more detailed information, you can read the full story here: https://www.cbsnews.com/news/ai-executive-dario-amodei-on-the-red-lines-anthropic-would-not-cross/